AI promised to help us through the pandemic. Has it?

Andrei Mihai

We’ve heard it a million times: artificial intelligence (AI) is a magnificent tool with the potential to revolutionize countless fields. Yet when it came to the pandemic, it was a bit of a cold shower.

Here and there, AI has helped society navigate the ongoing pandemic; in some cases, it proved to be an important puzzle piece. But it wasn’t the magical tool some had hoped for. Instead, the pandemic showed that when it comes to the really big challenges in our society, the technology might not be as mature as we hoped it would be.

If you’re reading this article, the odds are you’re at least somewhat familiar with the concept of AI — and let’s face it, who isn’t? Far from being a far-fetched futuristic technology, AI is already here, maybe not in the shape of smart robots, but rather in that of smart algorithms and statistics. AI is already a $10 billion industry forecasted to surge in the next few decades and spill into every imaginable aspect of society.

Naturally, when the World Health Organization declared a global pandemic, AI researchers and companies were all over it. Google’s DeepMind division, which developed the world’s first Go algorithm that surpassed human players, produced a list of AI-compiled protein reactions for SARS-CoV-2. The AI-focused drug discovery company Recursion released a morphological image data set of how infected cells react to thousands of drugs.

These are just two examples. It seemed like everywhere you looked, a new AI-related dataset would pop up, and that AI was on the verge of mastering the pandemic just like it mastered every game humans threw at it. Then, there was nothing.

You still need good data and trained people — two scarce commodities

It’s been over 9 months since the start of the SARS-CoV-2 outbreak, and we still haven’t seen the major AI pandemic breakthroughs that we’d expected. It’s not that scientists haven’t mobilized (there have been plenty of workshops, conferences, and collaborative efforts), but finding a global role for AI has proven challenging.

It’s not that there’s a lack of applications either. For instance, epidemic modelling, diagnosis and prediction of patient outcomes, and triaging are areas where AI can have an immediate impact. But the problem here is data, or rather, the lack of it. To make reliable predictions, algorithms must be trained on high-quality data that represent the scenarios being modelled. Sure, GPT-3 can output realistic, human-like prose from small prompts, but it was trained on datasets of half a trillion words. Meanwhile, COVID-19 is so new and complex that no comparable dataset exists. Games like Go or chess are one thing, since all of the information is available on the board; real-life scenarios are far less predictable.

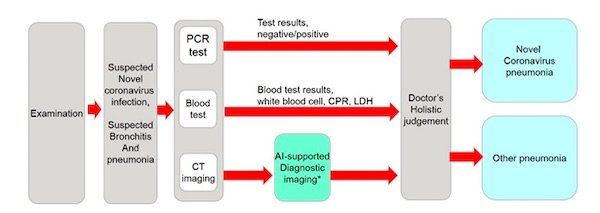

Training AI can also put more pressure on already-strained resources. It often “requires considerable human resources, which are precisely lacking in such context,” notes a commentary published in Nature by Peiffer-Smadja et al. For instance, computed tomography (CT) scans of the chest can be used to detect COVID-19 lesions, but this requires the input of trained specialists, who could otherwise be working on the front lines. Preliminary studies (typically based on a few hundred chest CT scans) suggest that COVID-19 can indeed be diagnosed with machine learning, but the application remains limited to small cohorts.

Another underwhelming situation came from the contact-tracing debacle. Here too, AI showed ‘promise’, but the end result was a mixed bag — to put it lightly.

“To date AI has had surprisingly little impact on the management of COVID-19,” summed up another Nature commentary by Hu et al.

The pandemic has exposed large gaps between social expectations and what AI can actually do at this particular moment. But this might say more about our expectations than about the technology itself. The pandemic hasn’t brought a day of reckoning for artificial intelligence; it has brought a maturity test.

AI has its place in the coronavirus pandemic — but it’s probably not the one you imagined

What we’re seeing is that though AI is far from being a magic ingredient you can add to make everything better, it can instead work to solve rather mundane and often unglamorous tasks. It is ready to take the spotlight, but the conditions need to be suitable.

The majority of existing AI models aim to predict COVID-19 hospitalization and outcomes, using predictors such as age, gender, blood biomarkers, and imaging. This not only helps ease the load of doctors and policymakers, but it can help them make better decisions and save lives. Several companies claim to have AI solutions that are efficient at diagnosing the virus.

When it comes to drug and vaccine discovery, one of the holy grails for artificial neural networks, AI has been used effectively, but mostly to support the work of statisticians and to accelerate the screening of candidate treatments. Here too, the core idea was to speed up and broaden the scope of research, not to produce innovation on its own.

For AI to work, we first need to define what ‘work’ means. Has it been a silver bullet to curb the pandemic or find a cure? No, not really. But it has been a useful tool, especially when deployed locally and pragmatically. With more global data sharing and collaboration, the impact of AI models can grow further, but we’re still only starting to see what the technology can do.

This isn’t the first pandemic we’ve faced and it’s unlikely to be the last. For the first time, however, we can deploy a coordinated, evidence-based, global public health response. AI does have a role to play in this response, but so far, it’s not a central one.

The post AI promised to help us through the pandemic. Has it? originally appeared on the HLFF SciLogs blog.